Hallucinations are not rare edge cases.

Without controls, they are the default behavior.

Most teams try to fix outputs.

Strong teams fix the system.

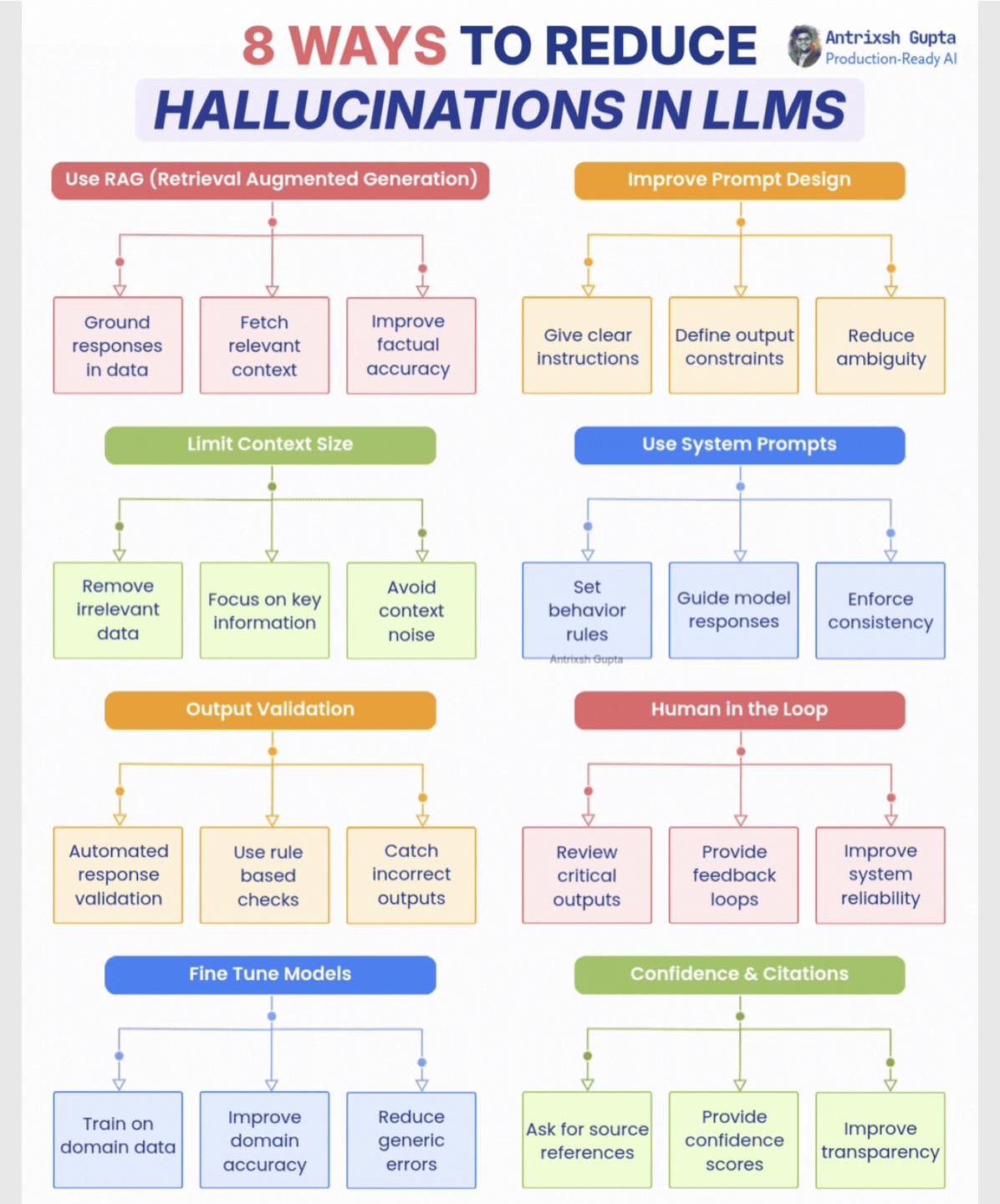

In this infographic, I break down 8 ways to reduce hallucinations:

• Use RAG (Retrieval Augmented Generation)

• Improve Prompt Design

• Limit Context Size

• Use System Prompts

• Output Validation

• Human in the Loop

• Fine Tune Models

• Confidence & Citations

Each method adds a layer of control.

→ RAG grounds responses in real data.

→ Prompt design reduces ambiguity in what the model is asked to do.

→ Context limits remove noise and confusion.

→ System prompts enforce consistent behavior.

→ Output validation catches incorrect responses.

→ Human in the loop adds oversight for critical tasks.

→ Fine tuning improves domain-specific accuracy.

→ Confidence and citations increase transparency.
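
To make the first few layers concrete, here is a minimal sketch of grounding, context limits, and a system prompt working together. It assumes retrieval has already happened. `Passage`, `SYSTEM_PROMPT`, `build_grounded_prompt`, and the thresholds are hypothetical names and values chosen for illustration, not part of any specific library.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    source_id: str
    text: str
    score: float  # retrieval relevance, higher is better (assumed scale 0..1)


# Hypothetical system prompt: restricts the model to the supplied sources
# and gives it an explicit way to abstain.
SYSTEM_PROMPT = (
    "Answer only from the numbered sources. "
    "Cite each claim as [source_id]. "
    "If the sources do not contain the answer, reply: I don't know."
)


def build_grounded_prompt(question, passages, max_passages=3, min_score=0.5):
    """Return (prompt, allowed_source_ids) for a grounded question."""
    # Context limit: drop weak matches, then keep only the top few passages.
    kept = sorted(
        (p for p in passages if p.score >= min_score),
        key=lambda p: p.score,
        reverse=True,
    )[:max_passages]
    sources = "\n".join(f"[{p.source_id}] {p.text}" for p in kept)
    prompt = f"{SYSTEM_PROMPT}\n\nSources:\n{sources}\n\nQuestion: {question}"
    return prompt, [p.source_id for p in kept]
```

The returned list of source IDs matters as much as the prompt: it is what a later validation step can compare the model's citations against.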

No single method eliminates hallucinations.

It is a layered defense problem.

The best systems combine multiple controls.

That is how you move from demos to trust.
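
One way to picture the layering is a small gate that every answer passes through before a user sees it. This is a sketch only. It assumes the model was told to cite sources as `[source_id]` and to reply "I don't know." when unsure. `review_answer` and its return labels are hypothetical.

```python
import re


def review_answer(answer, allowed_ids, high_stakes=False):
    """Return 'accept', 'reject', or 'human_review' for a model answer."""
    cited = set(re.findall(r"\[([^\]]+)\]", answer))

    # Layer 1, output validation: every citation must point at a source
    # that was actually supplied to the model.
    if cited - set(allowed_ids):
        return "reject"

    # Layer 2, citations: an uncited answer is only trusted when it is
    # an explicit abstention.
    if not cited:
        return "accept" if answer.strip() == "I don't know." else "reject"

    # Layer 3, human in the loop: critical tasks get a reviewer even
    # when the automated checks pass.
    return "human_review" if high_stakes else "accept"
```

No single check here is strong. A cited answer can still misread its source, which is why the high-stakes path ends with a person and not another rule.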

P.S. Which approach has worked best for reducing hallucinations in your system?

Follow us for more insights

#LLM #AIEngineering #AgenticAI #RAG #TechTips

8 WAYS TO REDUCE HALLUCINATIONS IN LLMS